Prompting and prompt engineering? — a comprehensive introduction

Prompting and prompt engineering are easily the most in-demand skills of 2023. The rapid growth of Large Language Models (LLMs) has led to the emergence of a new AI discipline called prompt engineering. In this article, let’s take a brief look at what prompting is, what prompt engineers do, and the different elements of a prompt that a prompt engineer works with.

What exactly is a prompt?

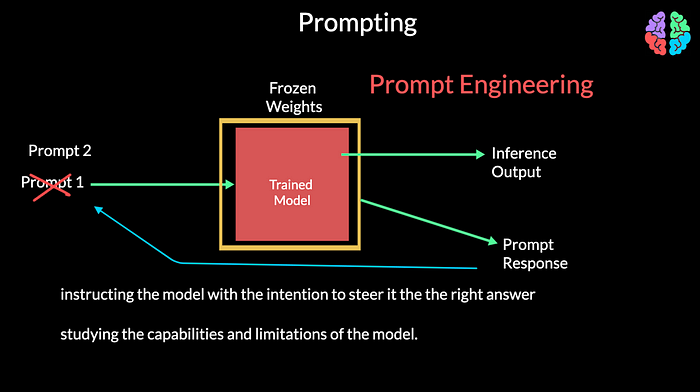

A prompt is simply the input that you provide to a “trained” model. By trained model, I mean that the weights of the model are fixed, or frozen, and do not change during the prompting process. You may now ask how this differs from inference, since we have long been training models, deploying them and running inference on them. The point is that with inference, the input is fixed. We never change it, and whatever the model gives as output, we accept as the result. Think of image classification as an example task.

However, with prompting, you are not restricted to a single input. You can tweak the input to improve the model’s behaviour. You are effectively instructing the model with the intention of steering it to the right answer. While you take the model as given when it comes to inference, with prompting you are studying the capabilities and limitations of the model. The art of designing or engineering these inputs to suit the problem at hand gives birth to a fairly new discipline called prompt engineering.

Prompt Engineering

Before diving into prompt engineering, let’s understand the motivation for engineering prompts with examples. Let’s say I want to summarise a given passage. I give a long passage from Wikipedia as input and at the end say, “summarise the above paragraph”. This way of providing simple instructions in the prompt to get an answer from the LLM is known as instruction prompting.

Let’s move to a slightly more complicated case of mathematics and ask the LLM to multiply two numbers. In this case, I am asking, “what is 23431232”. The answer I got was not quite right. Now let me modify the prompt and add an extra line: “what is 23431232. Give me the exact answer after multiplication”. We now get the right answer from the LLM.

So, clearly, the quality of the model’s output is determined by the quality of the prompt. This is where prompt engineering comes into play. The goal of a prompt engineer is to assess the quality of the output from a model and identify areas of improvement in the prompt to get better outputs. Prompt engineering is therefore a highly experimental discipline: studying the capabilities and limitations of the LLM by trial and error, with the intention of both understanding the LLM and designing good prompts.

Prompt elements

In order to engineer or design the prompts, we have to understand the different elements of a prompt. A prompt can contain any one or more of the following elements.

Prompts can be instructions where you ask the model to do something. In our example, we provided a huge body of text and asked the model to summarise it.

Prompts can optionally include a context for the model to better serve you. For example, if I have questions about say, English heritage sites, I can first provide a context like, “English Heritage cares for over 400 historic monuments, buildings and places — from world-famous prehistoric sites to grand medieval castles, from Roman forts … “ and then ask my question as, “which is the largest English heritage site?”

As part of the prompt, you can also specify the format in which you wish to see the output. So a prompt can optionally have an output indicator. For example, you can ask “I want a list of all the English heritage sites in England, their location and speciality. I want the results in tabular format.” Or, if you want an even better response, you can spell out the desired format with this syntax to indicate you wish to see columns and rows in the output:

Desired format:

Company names: <comma_separated_list_of_sites>

Sites: -||-

Location: -||-

Speciality: -||-

A prompt can include one or more pieces of input data, where we provide example inputs for what is expected from the model. In the case of sentiment classification, take a look at this prompt where we start providing examples to show our intentions and also specify we don’t want any explanation in the response:

Text: Today I saw a movie. It was amazing.

sentiment: Positive

Text: I don't feel very good after seeing that incident.

sentiment:

Types of Prompts — shots

This way of giving examples in the prompt is similar to how we explain things to humans by showing examples. In the prompting world it’s called few-shot prompting. We provide high-quality examples containing both the input and the output of the task. This way the model understands what you are after and so responds far better.

Expanding on our example, if I want to know the sentiment of a passage, instead of just asking, “what is the sentiment of the passage”, I can provide a few examples covering the possible classes in the output. In this case, positive and negative:

Text: Today I saw a movie. It was amazing.

sentiment: Positive

Text: I don't feel very good after seeing that incident.

sentiment: Negative

Text: Lets party this weekend to celebrate your anniversary.

sentiment: Positive

Text: Walking in that neighbourhood is quite dangerous.

sentiment: Negative

Text: I love watching tennis all day long

sentiment:

I can then leave the model to respond to the last text I entered. Typically 5 to 8 examples should be good enough for few-shot prompting. As you can guess by now, the drawback of this approach is that there will be many more tokens in your prompt. If you wish to start simple, you need not provide any examples, but can jump straight to the problem like this prompt:

Text: I love watching tennis all day long

sentiment:

This is zero-shot prompting, where you don’t provide any examples but still expect the model to answer you properly. Typically, while prompt engineering, you start with zero-shot prompts as they are simpler and, based on the response, you move on to few-shot prompts by providing examples to get a better response.
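The two styles differ only in whether worked examples precede the final query, so both can be produced by one helper. The sketch below is a minimal illustration and not part of the original article: the function name `build_few_shot_prompt` and its parameters are hypothetical, and it only builds the prompt text without calling any model.

```python
def build_few_shot_prompt(examples, query, input_label="Text", output_label="sentiment"):
    """Build a few-shot prompt; with an empty examples list it becomes zero-shot."""
    # Each example shows both the input and the expected output.
    blocks = [
        f"{input_label}: {text}\n{output_label}: {label}"
        for text, label in examples
    ]
    # The final block leaves the output label empty for the model to complete.
    blocks.append(f"{input_label}: {query}\n{output_label}:")
    return "\n\n".join(blocks)


examples = [
    ("Today I saw a movie. It was amazing.", "Positive"),
    ("Walking in that neighbourhood is quite dangerous.", "Negative"),
]

few_shot = build_few_shot_prompt(examples, "I love watching tennis all day long")
zero_shot = build_few_shot_prompt([], "I love watching tennis all day long")
print(few_shot)
```

Starting from `zero_shot` and adding examples only when the response is poor keeps the token count low for as long as possible.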

Types of Prompts — roles

If you wish to jump to a specialised topic with the LLM, you can steer it straight away to be an expert in a field by assigning it a role. This is called role prompting.

You would typically start the prompt with the expert role the LLM has to play, then follow with the instructions for what it needs to do. As a simple example, the role could be asking the LLM to be a poet and the instruction could be to just write a poem about AI Bites. Or it could be slightly more complicated, asking the LLM to act as a Linux terminal and providing specific instructions to copy the first 10 lines of a file into a different file and save it. You can even prevent it from including any other text in the output by explicitly telling it not to give any explanation.

You are a poet.

Write a poem about AI Bites

Act as a linux terminal

I want you to provide the shell command to read the contents of a file named "input.txt".

Copy the first 10 lines to a different file with the name "new.txt" and save it.

Do not give any explanations.
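Many chat-style LLM interfaces take a list of messages instead of one string, with the role placed in a separate "system" message. The sketch below assumes that message layout; the article itself does not prescribe it, and the helper name `role_prompt` is hypothetical. It only builds the data structure and does not contact any service.

```python
def role_prompt(role, instructions, no_explanations=False):
    """Return a two-message chat prompt: the role first, then the instructions."""
    lines = list(instructions)
    if no_explanations:
        # Explicitly forbid any extra text in the output.
        lines.append("Do not give any explanations.")
    return [
        {"role": "system", "content": role},
        {"role": "user", "content": "\n".join(lines)},
    ]


messages = role_prompt(
    "Act as a linux terminal.",
    [
        'I want you to provide the shell command to read the contents of a file named "input.txt".',
        'Copy the first 10 lines to a different file with the name "new.txt" and save it.',
    ],
    no_explanations=True,
)
for message in messages:
    print(f"[{message['role']}] {message['content']}")
```

If the model you use takes a single string, joining the two contents with a blank line gives the same prompt as the plain-text version above.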

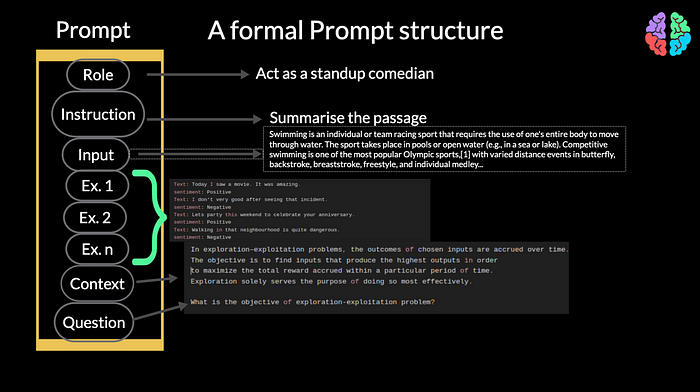

A formal Prompt structure

With all that said, if you want me to formalize the structure of a prompt, I would go about it like this. A prompt typically starts with a role for the model to play, if your prompt is about a specialised topic. Then it can have any instructions you would like to give the LLM. If you wish to provide additional information to the LLM, it can go after the instructions. After that you can provide high-quality examples if you are doing few-shot prompting. These examples can then be followed by any context you wish to provide to the model. If you wish to do a question-answering task, you can include your questions at the end.
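The ordering described above can be captured in a small data structure that renders only the parts you fill in. This is a rough sketch under stated assumptions: the class name `Prompt`, its field names, and the "Context:" and "Question:" labels are illustrative choices, not a standard.

```python
from dataclasses import dataclass, field


@dataclass
class Prompt:
    """Optional prompt parts, rendered in the order described in the text."""
    role: str = ""
    instruction: str = ""
    additional_info: str = ""
    examples: list = field(default_factory=list)
    context: str = ""
    question: str = ""

    def render(self):
        # Order: role, instruction, additional information, examples, context, question.
        parts = [self.role, self.instruction, self.additional_info, *self.examples]
        if self.context:
            parts.append(f"Context: {self.context}")
        if self.question:
            parts.append(f"Question: {self.question}")
        # Skip any part that was left empty.
        return "\n\n".join(part for part in parts if part)


prompt = Prompt(
    context="English Heritage cares for over 400 historic monuments, buildings and places.",
    question="Which is the largest English heritage site?",
)
print(prompt.render())
```

Because every field is optional, the same class covers a bare zero-shot question as well as a full role-plus-examples prompt.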

Prompt Formatting

Now that we have seen what constitutes a prompt, it’s even better if we know how to format these prompts. For example, it’s better to explicitly mention that a desired format follows and then actually provide the format.

Extract locations from the below text

Desired format:

Cities: <comma_separated_list_of_cities>

Countries: <comma_separated_list_of_countries>

Input: Although the exact age of Aleppo in Syria is unknown,

an ancient temple discovered in the city dates to around 3,000 B.C. Excavations in the

1990s unearthed evidence of 5,000 years of civilization,

dating Beirut, which is now Lebanon's capital, to around 3,000 B.C.
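One practical benefit of stating the desired format is that the reply becomes easy to parse in code. The sketch below assumes the model follows the format exactly; the `reply` string is a hand-written stand-in for a model response, not real model output, and `parse_fields` is a hypothetical helper.

```python
def parse_fields(response):
    """Parse lines of the form 'Name: a, b, c' into a dict of lists."""
    fields = {}
    for line in response.splitlines():
        name, separator, values = line.partition(":")
        if not separator:
            continue  # skip lines that do not follow "Name: values"
        fields[name.strip()] = [v.strip() for v in values.split(",") if v.strip()]
    return fields


# Hand-written stand-in for a reply in the desired format.
reply = "Cities: Aleppo, Beirut\nCountries: Syria, Lebanon"
print(parse_fields(reply))
```

A real reply may deviate from the format, so production code would need to validate the parsed result.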

Similarly, for input or context, it’s better to write “Input” followed by a colon and then provide your input.

When providing examples it is better to separate them with a couple of hashes like in this example.

##

Text: Today I saw a movie. It was amazing.

sentiment: Positive

##

Text: I don't feel very good after seeing that incident.

sentiment: Negative

##

If you are providing input, you may wrap it in quotes like this example:

Text: """

{text input here}

"""

Then there is something called the stop sequence, which hints to the model to stop generating text because it has finished with the output. You may choose any symbol as the stop sequence, but a newline seems to be the usual option.

Text: "Banana", Output: "yellow \n"

Text: "Tomato", Output: "red \n"

Text: "Apple", Output: "red \n"
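Hosted LLM services often accept the stop sequence as a request parameter and cut the output for you. The effect can be imitated on the client side, which is what this minimal sketch does; `truncate_at_stop` is a hypothetical helper and the sample strings are made up for illustration.

```python
def truncate_at_stop(text, stop="\n"):
    """Return the text up to, but not including, the first stop sequence."""
    index = text.find(stop)
    # If the stop sequence never appears, keep the whole text.
    return text if index == -1 else text[:index]


# Made-up raw generation that runs past the intended answer.
raw = 'red \nText: "Grape", Output: "purple \n'
print(repr(truncate_at_stop(raw)))
```

The same helper works for other stop sequences, for example `truncate_at_stop(text, stop="##")` when examples are separated by hashes.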

If you are working with, say, code generation, it’s better to write the instruction as a comment in the language you want the generated code to be in.

/*

Get the name of the user as input and print it

*/

# get the name of the user as input and print it
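Choosing the comment syntax can be automated when you generate prompts for several target languages. The mapping below is a small illustrative sketch: the name `COMMENT_STYLES`, the set of languages and the helper `code_prompt` are assumptions made for this example.

```python
# Comment prefix and suffix per target language (illustrative subset).
COMMENT_STYLES = {
    "python": ("# ", ""),
    "c": ("/* ", " */"),
    "javascript": ("// ", ""),
}


def code_prompt(task, language):
    """Wrap the task description in the comment syntax of the target language."""
    prefix, suffix = COMMENT_STYLES[language.lower()]
    return f"{prefix}{task}{suffix}\n"


task = "get the name of the user as input and print it"
print(code_prompt(task, "python"), end="")
print(code_prompt(task, "c"), end="")
```

The comment style itself acts as a hint about the language in which the model should continue.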

Watch the video

If you have read this far, I assume you either loved the article or are curious about AI. Either way, why don’t you watch our video on Prompt Engineering? This is the first video in a series about prompting. So why not subscribe and stay tuned!

Conclusion

With all that introduction to prompts, prompt engineering and their types, we have only scratched the surface here. For example, how can we ask the LLM to reason about a given situation? There are more advanced ways to prompt, like chain-of-thought, self-consistency, generated knowledge, etc. Let’s have a look at those in the upcoming posts and videos. Please stay tuned!